Running notebooks locally for Spark development can also be limited in functionality and performance, because you are running on a single machine rather than in a distributed environment.

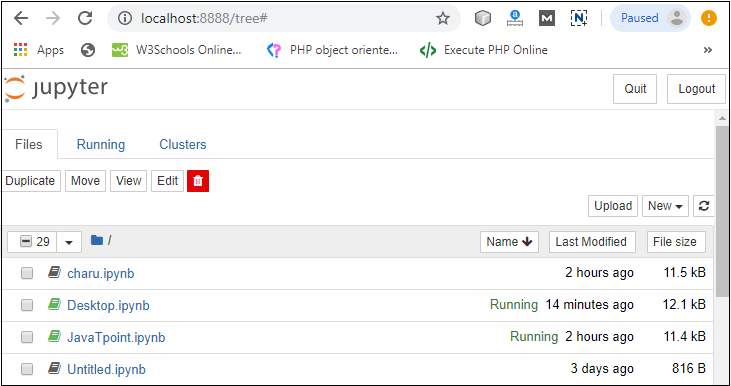

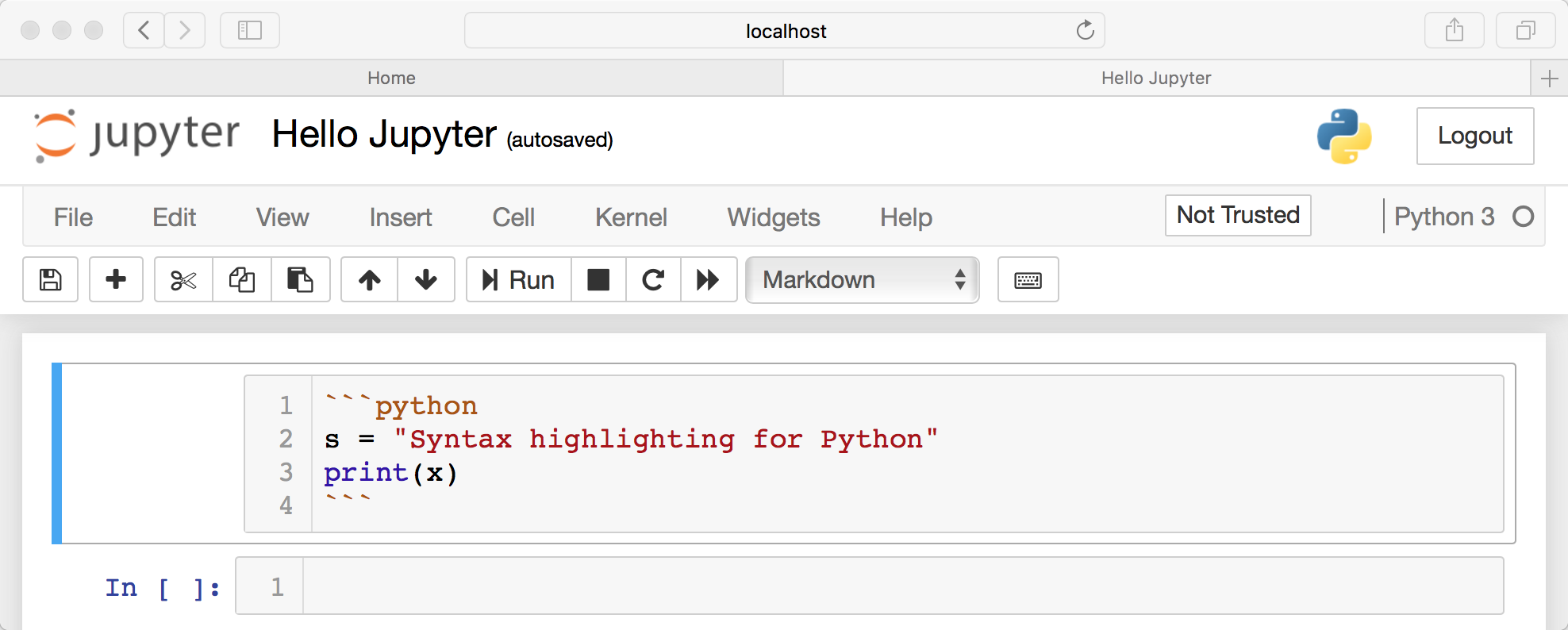

Jupyter Notebook is a web-based interactive computational environment for creating notebook documents. It supports programming languages such as Python, Scala, and R, and is widely used for data engineering, data analysis, machine learning, and other interactive, exploratory computing. Think of a notebook as a developer console or terminal, but with an intuitive UI that allows for efficient iteration, debugging, and exploration. Notebooks can be spun up locally from a terminal or executed in a distributed manner in a cloud-hosted environment. No matter the use case, it's not uncommon for an engineer to open a Jupyter notebook several times a day, so it should be no surprise that Jupyter notebooks are among the preferred tools of data engineers and data scientists writing Spark applications. However, configuring environments for Spark development in notebooks can be complicated. Installing external packages or libraries requires in-depth knowledge of Spark architecture, and it can be especially tricky to configure these environments so that teams can share them.
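To give a rough sense of the configuration work involved, here is a minimal sketch of attaching an external package to a local notebook session with PySpark. It assumes PySpark and a Java runtime are installed. The helper names `build_spark_conf` and `create_session`, the app name, and the Kafka connector coordinate are illustrative choices, not something prescribed by this post. `spark.master`, `spark.app.name`, and `spark.jars.packages` are standard Spark configuration keys.

```python
from typing import Dict, List, Optional


def build_spark_conf(
    packages: Optional[List[str]] = None,
    master: str = "local[*]",
    app_name: str = "notebook-session",  # hypothetical app name
) -> Dict[str, str]:
    """Collect Spark settings for a notebook session in a plain dict."""
    conf = {
        "spark.master": master,      # local[*] = all cores of a single machine
        "spark.app.name": app_name,
    }
    if packages:
        # Spark expects a comma-separated list of Maven coordinates
        conf["spark.jars.packages"] = ",".join(packages)
    return conf


def create_session(conf: Dict[str, str]):
    """Create (or reuse) a SparkSession from the settings above."""
    # Imported lazily so the configuration can be inspected without PySpark
    from pyspark.sql import SparkSession

    builder = SparkSession.builder
    for key, value in conf.items():
        builder = builder.config(key, value)
    return builder.getOrCreate()


# Example coordinate only; the Scala and Spark versions must match your install
example_conf = build_spark_conf(
    packages=["org.apache.spark:spark-sql-kafka-0-10_2.12:3.5.0"]
)
# spark = create_session(example_conf)  # run in a notebook cell with PySpark installed
```

Keeping the settings in a plain dictionary makes them easy to review or share with teammates, but every machine still needs compatible Spark, Scala, and Java versions, which is part of what makes shared setups tricky.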
